This year I got to know SAP fairly intimately, looking at it and into it from a test automation perspective and taking inventory of the possibilities and opportunities for automated testing of a (huge) SAP implementation. During this time I ran into a fair number of SAP-related people, ranging from SAP consultants and sales people to ABAP developers, HP sales people and SAP preferred suppliers. They all make it seem as though SAP development and testing is a different world that has nothing to do with the “normal” software development world. In my view this is wrong: SAP is just software. Yes, it has a bunch of particularities which you do not get in many other packages, but in terms of the actual functionality it is fairly comparable to Siebel and Oracle (no, I am NOT saying it’s the same, I am merely saying it is comparable). This almost religious separatism exists with neither Oracle nor Siebel, yet they too are bound by the laws of business process models, transaction codes and what not. So how come SAP is seen as so special and the others are not? Is SAP special?

When you start talking about test automation and SAP, the first things that pop up are SAP proprietary names such as CATT, eCATT and SAP TAO. Fortunately SAP themselves recommend against the use of either CATT or eCATT, so let’s dismiss these right here and now: they are tools that once were somewhat helpful but should now be considered redundant for most SAP implementations. SAP TAO, however, is of a different breed. It is pushed by SAP as the solution to use when trying to automate your testing. One minor issue with SAP TAO is that it does not really automate anything on its own; you invariably need HP Quality Center (HPQC) and Quick Test Professional (QTP) with it. HP tooling has some tailor-made solutions to integrate well with SAP TAO and, more specifically, with the SAP Solution Manager.
The setup as proposed in the picture below is the ideal picture, the way SAP would like to envision and implement a SAP testing solution. However, not all organisations have Solution Manager up and running for anything other than transport and low-level reporting, nor do all organisations have the budget for the HP tool set.

To work with SAP TAO effectively and efficiently, the Business Blueprint, the description of all business processes as used by the organisation with the SAP systems, should reside in the SAP Solution Manager. This blueprint should be maintained carefully and always be up to date. When changes to the system are made, either by updates to the system or by customizations in ABAP, these changes should be visible in the Solution Manager, so that the SAP Solution Manager Business Process Change Analyzer can identify which processes have changed and, based on this impact analysis, propose tests within HP Quality Center to be executed.

With SAP TAO the testers can “automate” the tests, which effectively means recording the steps. SAP TAO then adds some secret sauce by cutting longer scripts up into maintainable and reusable chunks. These scripts are then sent from SAP TAO into HPQC, where they can be associated with functional test descriptions. When a tester wants to run one of the automated tests, or for that matter the entire automated suite, HPQC is used again to trigger the scripts, which get executed with QTP. In other words, the actual test driver is QTP, not SAP TAO.

When starting up a SAP GUI instance and analyzing it with something like UISpy or some other tool which can show the objects on a screen, the fields and buttons are barely visible and not really open to test automation. Yet it is possible. If SAP is configured to enable scripting, the UI objects become accessible and thus the GUI is scriptable with any tool of your choice.
The moment this little flag has been set, a whole new world opens up in the GUI. All of a sudden it is open: the fields, screens and buttons all have an ID and can be hooked into by a driver of your choice. Effectively, the enable scripting setting ensures that none of the huge, expensive tools mentioned above are needed; it is possible to run through the application with any driver you want. The main thing needed to properly and solidly automate testing in SAP is now a well-grounded knowledge of the business processes the implementation is supporting (or driving). This is no different from what is needed when automating SAP with SAP TAO.
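
To give an idea of what “hooking into” those IDs can look like, here is a minimal sketch in Python. It assumes a Windows machine with SAP GUI running, scripting enabled on both server and client, and the pywin32 package installed; none of that comes from the setup described above, it is just one possible driver. The function names are my own, and the element IDs are the ones a standard SAP GUI main window usually exposes, so check them against your own system before relying on them.

```python
"""Sketch: driving SAP GUI through its scripting interface from Python."""


def attach_to_first_session():
    """Attach to the first open SAP GUI session (Windows only, needs pywin32)."""
    # Imported here so the helpers below stay importable on other platforms.
    import win32com.client

    sap_gui = win32com.client.GetObject("SAPGUI")
    application = sap_gui.GetScriptingEngine
    connection = application.Children(0)  # first open connection
    return connection.Children(0)         # first session on that connection


def run_transaction(session, tcode):
    """Type a transaction code into the command field and press Enter."""
    session.findById("wnd[0]/tbar[0]/okcd").text = "/n" + tcode
    session.findById("wnd[0]").sendVKey(0)  # virtual key 0 is Enter


def status_bar_text(session):
    """Read the message currently shown in the status bar."""
    return session.findById("wnd[0]/sbar").text
```

With a live session this would be used along the lines of `session = attach_to_first_session()` followed by `run_transaction(session, "VA01")`, where VA01 is picked purely as an illustration. Because the helpers only rely on `findById`, they can also be exercised against a stand-in object, which keeps the test code itself testable without a SAP system at hand.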

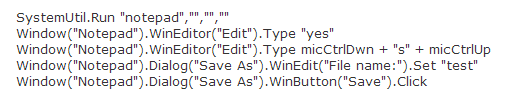

The benefits of having the option to choose your own drivers, your own programming language and your own reporting framework are huge. If SAP is merely in the organisation to support the business processes and the software developers within the organisation are writing their own code in Erlang, C++, C#, Java, Ruby, Python or whatever else you can imagine, the test suite for SAP can be in that same language. Having the automated test suite in a well-supported language, rather than just in QTP’s own VBScript, ensures a larger possible support base for the automated tests. It also enables easy integration of home-built software with the SAP systems, since all tests can be built in one language and in an end-to-end setup, again supported by the organisation’s own development group.

The SAP TAO and HPQC setup does have some benefits, of course. First of all, there is huge corporate support for both HP and SAP software products. But more importantly, there are some technical benefits to using SAP TAO, if the environment is set up properly. As mentioned above, there is this tool called the Business Process Change Analyzer, or BPCA, which can help extract transaction-based changes from a transport and help the tester decide, based on these changes, which test scenarios need to be run to effectively cover the business processes (or mainly the transactions associated both directly and indirectly with the transport). Next to that there is the benefit of using HPQC. I can hardly believe that I am saying this, since I am personally not a big fan of the HPQC suite, but the reporting possibilities and capabilities within HPQC are close to limitless. This means that it is possible to generate excellent reports, automatically, for both management-level execs and for the business analysts and ABAP specialists, on each test run, without having to think about it.

Having the full benefits of this setup, however, comes at a cost, a fairly sizable cost.
The licensing for HPQC, QTP and SAP TAO is not to be ignored, for starters. A hidden cost lies within the organisation: as stated, for the BPCA to do anything, Solution Manager needs to be utilized fully and the Blueprint needs to be ready and up to date. Moreover, it needs to be well maintained to ensure it remains the “Single Source of Truth” (as SAP coined it).

So, to answer the initial question: Is SAP special? It is, as a business process tool, definitely special, strong and extremely versatile. When looking at SAP as a system that requires testing and test automation, however, I am not convinced it is special. It’s just software, which is open for test automation with a range of drivers; one of these drivers might be QTP. If you do indeed choose to go for QTP with a SAP system, have a look into SAP TAO. However, do not feel that it is the only one out there which can effectively and efficiently be used for SAP test automation. All the others claiming they can, probably indeed can, just as well as SAP TAO with QTP. In the end it is all about how you use and abuse a tool. Whether you use QTP, White or Panaya, in the end they all merely function as a driver; it is the code the testers build which matters!

The cost of test automation

Over the past few posts I have written a lot about test automation, but I have left out one very important subject thus far: what is the actual cost of test automation? How do you calculate the cost of test automation, and how do you compare this cost to the overall costs of testing? In other words, how do you get to the return on investment people are looking for? The first thing you need if you want to know and understand the costs of test automation is a CLEAR understanding of the goal. Typically there are three possible goals:

- reduce the cost of testing

- reduce the time spent on testing

- improve the quality of the software

These three have a direct relation with each other, and thus each of them also has a direct impact on the other two depending on which one you take as your main focus. In the next few paragraphs I will discuss the impact of picking one of the three as a goal.

Reduce the cost of testing

When putting the focus of your test automation on reducing the overall cost of testing, you set yourself up for a long road ahead. There generally is an initial investment needed before test automation becomes cost reducing. Put simply, you need to go through the initial implementation of a tool or framework, get to know this tool well, and ensure that the testers who need to work with the tool all know and understand how to work with it as well. If you go for a big commercial tool there is of course the investment in purchasing the licenses, which often range between 5.000 and 10.000 euros per seat (floating or dedicated). Assuming more than one test engineer will need to use the tool concurrently, you will need more than one license. This license cost needs to be earned back by an overall reduction in testing costs (since that is your goal). An average investment graph will look something like this when working with a commercial tool:

The initial investment is high: this is the cost of the licenses. At that point there is nothing yet; no tests have been automated and you have already spent a small fortune. The costs will not drop immediately, since after the purchase the tool needs to be installed, people need to be trained, etc. All the while no tests have been automated, so the cost is high but the return is zero. Once the training process has been finalised and the implementation of the automated tests has started, the cost line will slowly drop to a stable flatline. The flatline is the running cost of test automation, which includes the cost of maintenance of the tool and the test scripts and of course the cost of running tests and reviewing and interpreting the reports.
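
To put some arithmetic behind the earn-back reasoning, here is a deliberately crude sketch. It assumes a fixed cost per test cycle for both manual and automated testing, which real projects will not have, and all figures in the usage note are made up apart from the license price, which sits inside the range mentioned above. The function and parameter names are my own.

```python
import math


def break_even_cycle(seats, license_per_seat, training_cost,
                     automated_cycle_cost, manual_cycle_cost):
    """Return the first test cycle at which automation has earned itself back.

    Returns None when an automated cycle is not cheaper than a manual one,
    because then the initial investment is never recovered.
    """
    saving_per_cycle = manual_cycle_cost - automated_cycle_cost
    if saving_per_cycle <= 0:
        return None
    initial_investment = seats * license_per_seat + training_cost
    return math.ceil(initial_investment / saving_per_cycle)


def cumulative_cost(initial_investment, cost_per_cycle, cycles):
    """Total spend after 0..cycles test cycles, as a list."""
    return [initial_investment + cost_per_cycle * n for n in range(cycles + 1)]
```

With two seats at 7.500 euros, 5.000 euros of training, 1.000 euros per automated cycle and 3.000 euros per manual cycle, `break_even_cycle(2, 7500, 5000, 1000, 3000)` gives 10: only from the tenth cycle on is the automated route no more expensive than staying manual. That is the long road ahead in numbers.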

In a LinkedIn group, a post asked the ominous question “Who’s afraid of test automation?” One of the more disturbing responses was as follows (I am quoting literally from the group, though with a changed name):

Q: Who’s afraid of test automation?

A: Anyone with headcount. What would it look like if all of the testing is done by machine and there is only one person left in the organization?

Respectfully, Louise, PhD

The idea that test automation will take away testers’ work completely, and as such will drastically reduce the running costs of a test team, is a common misconception which would take too much time and effort to address right now. In short: test automation may reduce some of the workload, but it will not reduce your cost of testing by removing all of the testers, nor should this ever be your objective.

In my next blog post I will continue this story, with the focus on “Reduce the time spent on testing”.

Is testing the dumping grounds of IT?

The other day I was talking to a few developers I was on an assignment with about getting testers added to their scrum team, and the response I got from them disturbed me. They told me that in their experience most testers do not work together with the team; they work against development, trying to get everything fully tested despite knowing this is not feasible, and with that they delay projects. On top of that, they told me, most of the testers they have worked with are part of the dumping grounds of the IT industry. By that they meant that, in their view, most testers are not good enough to be a developer, so they decided to become testers instead (<sarcasm> 'cause, come on, testing is not that difficult, anyone can do that! </sarcasm>).

I was shocked to hear there are still a lot of developers out there who believe that testers are the dumping grounds of the IT industry, but I was even more shocked by their experiences with testers working in an “us versus them” modus operandi instead of working in a team, as part of a joint effort with a shared focus and goal.

What is it that still makes testers often work against developers instead of with them?

Most testers I have worked with over the past years agree that working side by side with development is the most effective and efficient way of working. This way you both keep track of your joint goal: get the software out on time, on budget and according to what your customer (or end-user, for that matter) wants and needs. Together you try to add value to the software.

So is it indeed true that there are still a lot of testers out there in the field who are not seeing the big picture and are trying to prove their worth by working against dev and looking for bugs that are not relevant? That is, looking for bugs just for the sake of finding one, no matter what the value of that bug is to the end-user or customer, just so they can triumphantly point out to a developer: “See! There are bugs in your code, you did it wrong!” Unfortunately I fear there are still too many testers out there who think and work this way, not to mention all the developers out there who seem not to understand the added value of a good tester to the team and to the developer’s work!

Fortunately there is a wonderful contrast out there as well, in the form of this blog post by Nathan Lusher, who shows that there are indeed good testers out there who weigh in on a project and prove the value of testing, and with that show that testers are not (or at least not everywhere) the dumping grounds of IT.

In my experience, there are a lot of very good, inspired and knowledgeable testers out there, who see the added value of working together in a team with a shared goal, a shared approach and shared respect. If testers want to get the respect of developers, I believe it is up to quite a lot of the testers to start by showing respect to the developers and, where needed, increasing their technical knowledge in order to be able to counterbalance a developer’s viewpoint. You get what you give!

Are we building shelf-ware or a useful test automation tool?

Frustration and astonishment inspired this post. There currently is a big regression testing cycle going on within the organization. Over the past 4 months we have worked hard with the testers to establish a sizable base of automated tests; however, the moment regression started everyone seemed to drop the automation tools and revert to what they have always done: open Excel and check the check-boxes of the scripted tests.

Considering that we have already set up a solid base, with a custom fixture enabling the tests (or checks, if you will) to do exactly what the tester wants them to do and what a tester would do manually whilst following the prescribed scripts, and considering that we have written out a fair share of these prescribed scripts in FitNesse, what is stopping them from using this setup?

Are we automating for the sake of automating?

While working on this extremely flexible setup, with FitNesse and with Selenium WebDriver and White as the drivers, I have started wondering more and more why we are automating in this organization. The people responsible for testing do not seem to be picking up on the concept of test automation. They all state loudly that it is needed and that it is great that we are doing it, but when regression starts they immediately go back to manual checks. I say manual checks on purpose, since the majority of testing here is fully scripted. Most of these scripts do not leave anything to the tester’s imagination, which makes these tests checks rather than tests: checks we can execute automatically, repeatedly and consistently with tools such as FitNesse.
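
To illustrate why a fully scripted test is really a check, here is a small sketch of the idea behind a table-driven check. It is plain Python and not FitNesse: in FitNesse the rows would live in a wiki table and a fixture would do the calling. The discount rule standing in for the system under test, and all names and values, are invented for the example.

```python
def run_decision_table(check, rows):
    """Call check(**inputs) for every row and compare the result with the expectation.

    Returns one (inputs, expected, actual, passed) tuple per row.
    """
    results = []
    for inputs, expected in rows:
        actual = check(**inputs)
        results.append((inputs, expected, actual, actual == expected))
    return results


def discount_percentage(order_total, is_member):
    """Hypothetical business rule standing in for the system under test."""
    if order_total >= 100:
        return 15 if is_member else 10
    return 5 if is_member else 0


# Each row is what a scripted manual test would spell out step by step:
# these inputs, this expected outcome, nothing left to the imagination.
ROWS = [
    ({"order_total": 50, "is_member": False}, 0),
    ({"order_total": 50, "is_member": True}, 5),
    ({"order_total": 100, "is_member": False}, 10),
    ({"order_total": 100, "is_member": True}, 15),
]

for inputs, expected, actual, passed in run_decision_table(discount_percentage, ROWS):
    print("PASS" if passed else "FAIL", inputs, "expected", expected, "got", actual)
```

Adding another input combination is one more row, and rerunning all of them costs nothing, which is exactly the part of regression that does not need a human ticking boxes.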

How do you make testers aware that a lot of the scripted tests should not be done manually?

Let me be clear on this: I am a firm believer in both manual and automated testing. They both have their value and should be used together. Automated testing is not here to take away the manual testing; rather, it is here to support the testers in their work. Automated testing should be complementary to manual testing. Thus far in this organization I have seen manual testing happening and I have seen (and experienced) a lot of effort being put into writing out the automated tests in FitNesse. However, there has not been clear cooperation between the two, despite the people writing the automated tests being the same individuals who are responsible for executing the manual tests (which they have rewritten into FitNesse in order to build automated tests).

We have tried coaching on the job and we have tried dojos, but alas, I still see a hell of a lot of manual checks happening instead of FitNesse doing these checks for them. What is it that makes people not realize the potential of an automation tool? Thus far I have come up with several possible causes:

- In our test-dojos we mainly focused on how to write tests in FitNesse rather than focusing on what you can achieve with test automation. This has led me to the idea that we rapidly need to organize another workshop or dojo in which the focus should be on what the advantages of automated tests are.

- Another reason could be that test automation was not initiated by this team; it was put upon the team as a responsibility. The team we are currently creating this fixture for is a typical end-of-the-line, bottom-of-the-testing-chain team: everything they get to test is thrown over a wall and left to them to see if it works appropriately. Most of them do not seem to have consciously chosen to be testers; instead they have accidentally rolled into the software testing field. Some of them have adapted very well to this and clearly show affinity and aptitude for testing; others, however, would in my opinion be better off choosing a different occupation. It is exactly the latter group that needs to be pulling the test automation effort currently going on.

So what will make people use automation tools properly?

The moment I can answer this one with a general rule of thumb I will sell it to the highest bidder. Within this organization, however, there doesn’t seem to be a simple solution just yet. As I have written before, there is not yet one sole ambassador for test automation in this organisation. Even if there were, we would still need to cause a shift in the general mindset of the testers. Rather than just walking through their predefined set of instructions in Excel, they need to consider for themselves: what has already been covered by the automated tests, and how can I supplement these tests with manual testing?

We will need to find a way to get the testers to step out of their comfort-zone and learn how to utilize tools other than Excel and MS Word. Maybe organizing a testing competition will work, see who can cover the most tests in the shortest time and with the highest accuracy?

I am not a great believer in measuring things in testing, but maybe inventing some nice measurements will help the testers see the light. For example “How often can you test the same flow with different input in a certain timeframe?”.

Did we build shelf-ware or did we add value to the testing chain?

At the moment I often ask myself whether I am building shelf-ware or actually am building a useful automation tool (trying to stay away from terms like framework, since that might only increase the distance between the tool and the testers). Whenever I play around with the FitNesse/WebDriver/White setup we currently have running I see an incredibly versatile test automation tool which can be used to make life a lot easier for those who have to test the software regularly and repeatedly (not just testers, but also developers, product owners etc. can easily use this setup).

It is completely environment agnostic: if needed we can run (and in the past have run) the same tests we run in a test environment in production as well. It is easy to build new test cases, scripts or scenarios (I seem to have lost track of which would be the safe term to choose here; they all have their own subconscious connotations) since it is a wiki. All tests are human readable: if you can read an Excel sheet, reading the tests in FitNesse with Slim, the way we built it, should be child’s play.
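
One common way to get that kind of environment independence is to keep every environment-specific detail out of the tests and resolve it at run time. The sketch below shows the idea in Python; it is not how our FitNesse setup does it, and the `TARGET_ENV` variable, the environment names and the URLs are all placeholders I made up.

```python
import os

# Hypothetical base URLs; real ones would come from the organisation's own config.
ENVIRONMENTS = {
    "test": "https://test.example.com",
    "acceptance": "https://acceptance.example.com",
    "production": "https://www.example.com",
}


def base_url(environ=None):
    """Pick the base URL for the environment named in TARGET_ENV (default: test)."""
    env = os.environ if environ is None else environ
    name = env.get("TARGET_ENV", "test")
    if name not in ENVIRONMENTS:
        raise ValueError(
            f"Unknown environment {name!r}; expected one of {sorted(ENVIRONMENTS)}"
        )
    return ENVIRONMENTS[name]


def page_url(path, environ=None):
    """Build a full URL for a page, so tests only ever mention relative paths."""
    return base_url(environ).rstrip("/") + "/" + path.lstrip("/")
```

A test that asks for `page_url("/login")` then runs unchanged against whichever environment is selected when the suite is started.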

Despite all these great advantages, the people that should be using it are not.

Reading all this back makes me consider one more thing: we started off building this setup with these tools based on a request from higher management. The tool selection was done by the managers (or team leads, if you will) and not by the team themselves. Did we miss out on the one thing the IT industry has taught us? Did we build something we all want, but not what our customer wants and needs? I hope not. For one thing, I am quite sure this is what they need: an easy-to-use tool to automate all tedious, repetitive check work.

The question that remains: is this what our customer, or to be more exact, our customers’ end user, the tester, wants?