Tip 8 – Generating a specific amount of hits per second

JMeter is generally oriented towards a performance test approach where the load is defined by a number of concurrent users, or threads. When you talk metrics with systems engineers, however, you are more likely to hear about hits per second, requests per second or transactions per second. So how do you get JMeter to generate a certain number of hits per second?

There are of course several ways to go about this, but in this article I will limit myself to a fairly simple method, using a Timer.

Constant Throughput Timer

The Constant Throughput Timer can be very useful in generating a (surprise!!) constant throughput.

What this timer does is make sure that, regardless of the number of threads you have started, the test pauses whenever needed to throttle the number of requests. It is good to note, by the way, that the target throughput is NOT expressed per millisecond or per second, but per minute.
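To make the throttling idea concrete, here is a minimal sketch of the pacing logic in Python. It is a simplified model under my own assumptions, not JMeter's actual implementation; the function name `pause_before_next_sample_ms` and its parameters are hypothetical.

```python
MS_PER_MINUTE = 60_000


def pause_before_next_sample_ms(target_per_minute, ms_since_last_sample):
    """Return how long a single thread should wait before its next sample.

    Simplified model (an assumption, not JMeter's code): the thread aims
    for one sample every 60,000 / target_per_minute milliseconds and only
    pauses when it is running ahead of that schedule.
    """
    if target_per_minute <= 0:
        raise ValueError("target_per_minute must be positive")
    interval_ms = MS_PER_MINUTE / target_per_minute
    # Never return a negative pause: a slow thread is simply not delayed.
    return max(0.0, interval_ms - ms_since_last_sample)
```

In this model, with a target of 600 samples per minute, a thread that finished its previous sample 40 ms ago waits another 60 ms, while a thread that is already slower than the target is not delayed at all. A timer like this can slow a test down, but it cannot make slow threads go faster.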

When your requirements state that the application (and server) should manage to survive some 60 hits/second, you will need to convert your hits per second to hits per minute before entering the target throughput (here: 60 hits/second * 60 seconds = 3,600 hits/min).
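The conversion is trivial, but it is easy to forget when filling in the timer. A small helper, sketched here in Python with a hypothetical name, makes the arithmetic explicit:

```python
def hits_per_minute(hits_per_second):
    """Convert a per-second requirement into the per-minute value the timer expects."""
    return hits_per_second * 60


# Two example requirements, converted to target throughput values.
for per_second in (10, 60):
    print(per_second, "hits/second =", hits_per_minute(per_second), "hits/min")
```

So a requirement of 10 hits/second becomes a target throughput of 600, and 60 hits/second becomes 3,600.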

Keep in mind that your load requirements may be ambiguous. You really have to find out from your customer, product owner or whoever came up with the performance requirements what exactly they expect. When they define hits per second, what do they mean by that? Are those page views or actual requests (one page can consist of several requests for HTML, CSS, JS, images etc.)? Always verify and double check that what you mean by hits per second or requests per second is indeed what they mean as well!
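To illustrate how much the two interpretations can differ, here is a rough Python sketch. The function name and the figures (10 page views per second, 12 requests per page) are made up for illustration; substitute whatever your own requirements and page composition turn out to be.

```python
def requests_per_minute(pageviews_per_second, requests_per_page):
    """Target throughput when the requirement counts page views, not requests.

    Assumes every page view triggers the same number of requests, which is
    a simplification (caching and page variety will change this).
    """
    return pageviews_per_second * requests_per_page * 60


# Hypothetical numbers: the same "10 per second" read two different ways.
as_requests = 10 * 60                       # 10 requests/second
as_pageviews = requests_per_minute(10, 12)  # 10 page views/second, 12 requests each
print(as_requests, as_pageviews)
```

With these assumed numbers, the same "10 per second" means either 600 or 7,200 samples per minute, which is exactly why the definition needs to be agreed upon up front.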

Understanding the “Calculate Throughput based on” variable

There are several ways the throughput can be calculated and enforced. The default setting is “this thread only”. In my eyes, however, the most logical setting (based on the above requirements) is “all active threads”.

- this thread only – each thread, as defined in your Thread Group thread properties, will try to stick to the target throughput. This means that when you have 150 threads, your throughput will be 150 * Target throughput.

- all active threads in current thread group – the target throughput is divided across the active threads in the thread group. In other words, this will give you the actual target throughput as you have configured. This throughput is for this specific thread group only! Each thread is delayed as needed, based on when that particular thread last ran.

- all active threads – When you have more than one thread group, this setting becomes interesting. This will divide the target throughput across all active threads in all thread groups. Be aware that each thread group requires a Constant Throughput Timer with the same settings for this to work.

- all active threads in current thread group (shared) – Each thread is delayed based on when any thread in the group last ran, meaning the threads run consecutively rather than concurrently. Apart from that, this setting does exactly the same as “all active threads in current thread group”, i.e. it will give you the actual target throughput as you have configured.

- all active threads (shared) – Each thread is delayed based on when any thread in any thread group last ran, meaning the threads run consecutively rather than concurrently. “Any thread” has a wider meaning here than in the previous setting: this setting runs across all threads and thread groups you have configured.
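The practical difference between these settings can be summarised with a small Python sketch. This is a simplified model based on the descriptions above, not JMeter code; the function names are hypothetical, and `active_threads` is assumed to mean the threads the chosen setting counts (one thread group, or all of them).

```python
DIVIDING_MODES = {
    "all active threads in current thread group",
    "all active threads",
    "all active threads in current thread group (shared)",
    "all active threads (shared)",
}


def expected_total_per_minute(mode, target_per_minute, active_threads):
    """Overall throughput you should expect for a given setting."""
    if mode == "this thread only":
        # Every thread tries to reach the target on its own.
        return target_per_minute * active_threads
    if mode in DIVIDING_MODES:
        # The target is divided across the threads, so the total is the target.
        return target_per_minute
    raise ValueError(f"unknown mode: {mode}")


def per_thread_per_minute(mode, target_per_minute, active_threads):
    """Average share of the throughput that a single thread produces."""
    return expected_total_per_minute(mode, target_per_minute, active_threads) / active_threads
```

For example, with a target throughput of 600 and 150 threads, “this thread only” aims for 90,000 samples per minute in total, whereas any of the “all active threads” variants aims for 600 in total, or on average 4 per thread.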

How do you know which setting you need?

These different settings can be quite confusing to any JMeter user, even an experienced one. I would therefore recommend the following:

Make sure you put the Constant Throughput Timer in the root of your test plan (i.e. at the highest level) and let it dictate the throughput of all of your threads and thread groups by selecting “all active threads”. That way you know for sure what the actual throughput of your test is.

In a somewhat more complex setup, where you have several thread groups that each need a different number of requests per second, put a timer in the root of each particular thread group and stick to “all active threads in current thread group”.

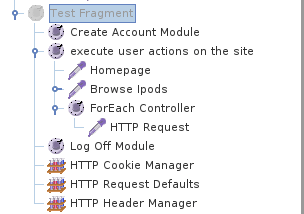

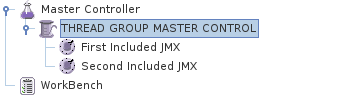

You can now easily control several scripts from within one or more thread groups in a single JMeter instance, while still keeping things maintainable and reusable.

In order to run browser-based tests from JMeter I use the wonderful work of the people over at
